The bottleneck isn’t data. It’s interpretation.

At most small institutions, the problem is not that IR can’t produce data. The problem is that nobody has time to think about what it means – including the IR director producing it.

I run a three-person IR office at a Lasallian Catholic university in the Bronx. We produce enrollment reports, retention analyses, IPEDS submissions, graduate outcome surveys, and competitive intelligence briefs. The data exists. It is reasonably clean. It is often delivered on time. And more often than I would like to admit, I walk into a cabinet meeting having produced the numbers without having fully thought through what they are telling me. Production pressure crowds out interpretation. The report ships. The judgment lags.

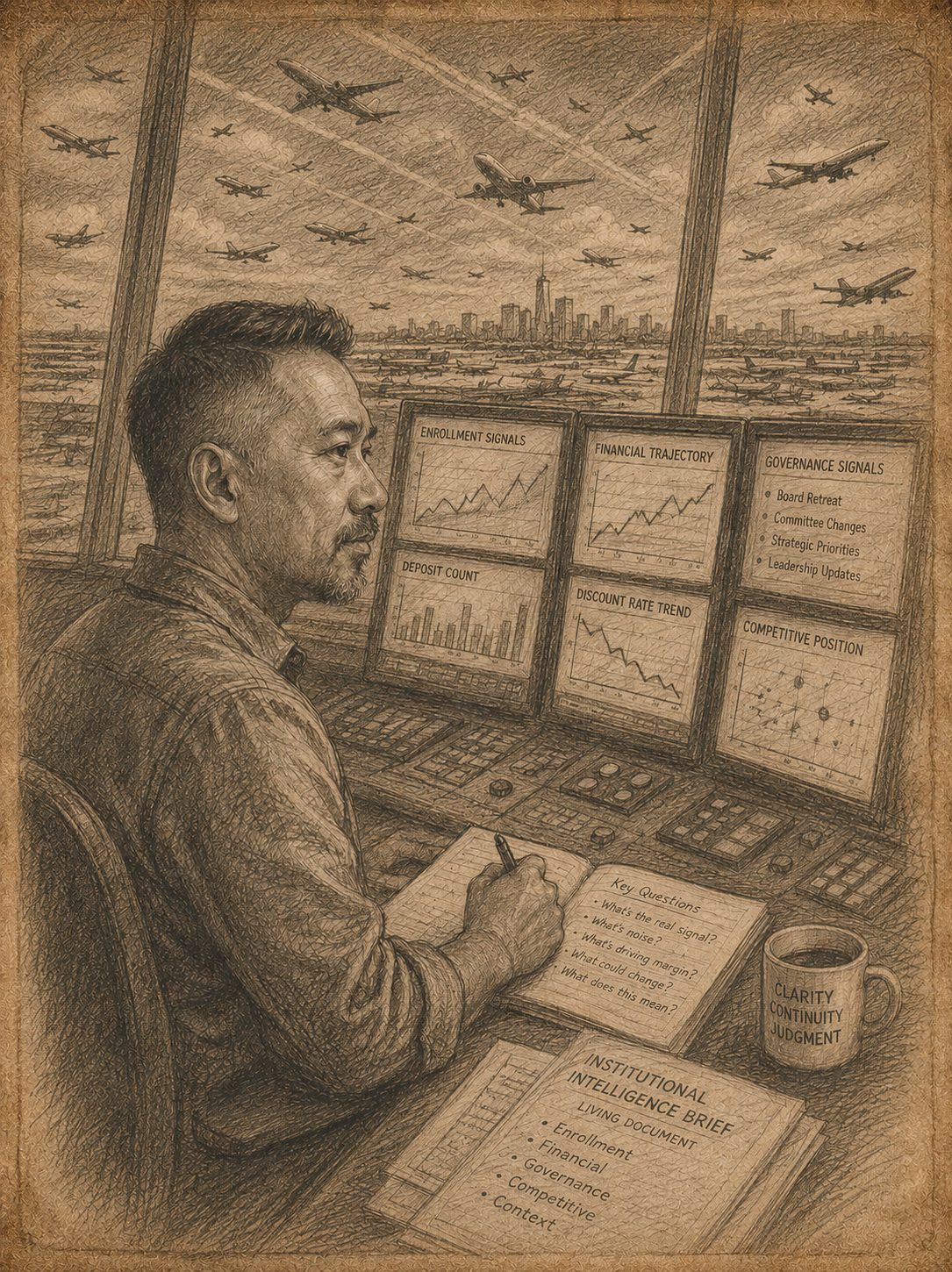

About eighteen months ago I started building what I now call a living institutional intelligence brief. Nobody asked me to build it. Nobody receives it. It exists entirely for my own use – a private document that holds the institution’s financial trajectory, enrollment signals, governance developments, and competitive position in one place I can return to, update, and think against. The alternative is carrying all of it in my head, which is fragile and, in a busy semester, unreliable.

What made it possible is AI used as a synthesis partner, not a report generator.

Most IR applications of AI I have seen are about production – faster drafting, cleaner formatting, quicker summarization. Useful, but that focus leaves the interpretation problem untouched. Someone still has to hold the full institutional picture simultaneously: discount rate trend alongside deposit count alongside governance signal alongside competitive position. In a three-person shop, that person is me. Until recently, it lived mostly in my head.

When I started using AI as a reasoning partner for this work, the synthesis became something I could write down and return to. I could bring financial data, enrollment signals, and a set of questions into a working session and come out with an interpretation better than what I would have reached alone – not because the AI knew things I did not, but because it could hold more threads at once while I focused on the judgment. Which signals are noise. What an enrollment volume gain actually means when margin is moving the other direction. Whether a governance shift changes how you read an operational pattern.

The document that came out of this is not an AI product. It is mine. The AI did not write the conclusions. It helped me get to them faster, and it helped me keep the thread across weeks of returning to a document that would otherwise require reconstructing context from scratch each time.

What this produces is not a better deliverable. It is a better version of me walking into the room. The brief never goes to the cabinet. But the cabinet gets an IR director who already knows what they think, who has already processed that week’s signals, who is not constructing the interpretation on the fly during a fast conversation. That is harder to measure than a report and easier to underestimate.

The field has spent a decade debating whether AI will replace institutional researchers. That debate has distracted from a more pressing question: whether IR professionals will build the interpretive capacity that institutions running on thin margins and incomplete information actually need. Not to produce better outputs, but to think more clearly under conditions that do not reward slowness. The data will not interpret itself. The meetings will not wait. The IR director who walks in prepared is not doing a better version of the old job. They are doing something different.